Cortex 2.0 Multi-Gripper Generalization

Full-stack automation means solving the hard problems end-to-end. The real world rarely hands you a clean problem with a single robot and a single gripper. It hands you a fashion fulfillment center with pick stations handled by single arms, returns stations requiring dual arms for whatever comes back through the network, and humanoids loading carts on the shop floor. Running one model across all of it is what Cortex has been built for from day one.

To achieve true robotics generalization, intelligence needs the right tools to act. The industry likes to celebrate hardware breakthroughs in isolation. We see them as a means to an end. A gripper alone isn't a solution; it's a component. The interesting work is integrating it into a system that actually performs: perception, planning, recovery, knowing when to commit and when to recover. Without a model that already knows how to use any tool you put in front of it, every new piece of hardware turns into another integration project, and the value of better fingertips gets eaten by the cost of teaching the rest of the system how to use them. Object distributions don't sit still. Returns stations see thousands of SKUs nobody planned for. The model running in production has to generalize across all of it: across robots, across grippers, across the long tail of the catalog.

The gripper changes, the model doesn't.

We recently integrated a new end-effector built for footwear. Cortex picked it up without retraining the planner. A small dataset of teleoperated demonstrations was enough to ground the new motions; the rest of the model carried over unchanged. That single integration expanded the items our fleet handles reliably by an order of magnitude, and unlocked the full range of fashion items.

Shoeboxes make up a large share of the volume in fashion customers' warehouses, and they turn up at every kind of station. A humanoid loading and unloading carts on a shop floor. A dual-arm returns station handling boxes that have already been opened, repacked, and reshaped by the journey through the network. A single-arm picking cell with a custom end-effector built specifically for shoeboxes: fast, robust, and capable of moving thousands of units per shift without missing a beat.

How Cortex 2.0 picks up a new gripper

Cortex 2.0 treats every gripper as a first-class entity. Each gripper has its own learned dynamic embedding in a shared embedding table, and that embedding is injected into the world model, the low-level VLM, and the VLA action heads. Planning happens in visual latent space, where pixels carry regularities about objects, contact, and motion that transfer across embodiments. The embedding conditions the model on which hand it's wearing at every layer where that information matters. The world model rolls out different candidate futures, and PRO scores each one for progress, risk, and stability, all conditioned on the active gripper.
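To make the scoring step concrete, here is a minimal sketch of ranking candidate futures by progress, risk, and stability. It is an illustration only: the `Candidate` class, the `pro_score` function, the weights, and the linear combination are all assumptions, not a description of how PRO is actually implemented. The per-candidate numbers are assumed to come from rollouts that were already conditioned on the active gripper.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One candidate future (hypothetical structure, for illustration)."""
    name: str
    progress: float   # how far the rollout advances the task, higher is better
    risk: float       # estimated chance of damage or failure, lower is better
    stability: float  # how secure the grasp stays, higher is better


def pro_score(c, w_progress=1.0, w_risk=1.0, w_stability=0.5):
    # Assumed scoring rule: reward progress and stability, penalize risk.
    return w_progress * c.progress - w_risk * c.risk + w_stability * c.stability


def select_best(candidates):
    # Pick the candidate future with the highest combined score.
    return max(candidates, key=pro_score)


candidates = [
    Candidate("fast_top_grasp", progress=0.9, risk=0.6, stability=0.4),
    Candidate("side_grasp", progress=0.7, risk=0.1, stability=0.8),
]
best = select_best(candidates)
print(best.name)
```

With these made-up numbers the slower but safer side grasp wins, which is the kind of trade-off a progress/risk/stability score is meant to surface.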

The action decoder produces the control representation. Two thin output heads on top produce the arm command and the end-effector command.
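As a rough sketch of that layout, the snippet below maps one shared control representation through two small linear heads. Everything here is assumed for illustration: the dimensions (an 8-value control vector, 7 arm outputs, 1 end-effector output), the random weights, and the helper names `make_head`, `linear`, and `decode` are hypothetical and not taken from Cortex.

```python
import random


def make_head(out_dim, in_dim, rng):
    """Create a thin linear head: a weight matrix plus a zero bias."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)]
               for _ in range(out_dim)]
    bias = [0.0] * out_dim
    return weights, bias


def linear(weights, bias, x):
    """Apply y = W x + b using plain Python lists."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


rng = random.Random(0)
CONTROL_DIM = 8                                  # assumed size of the control representation
arm_head = make_head(7, CONTROL_DIM, rng)        # assumed: 7 arm joint targets
gripper_head = make_head(1, CONTROL_DIM, rng)    # assumed: 1 end-effector command


def decode(control):
    # Both heads read the same shared control representation.
    arm_cmd = linear(*arm_head, control)
    ee_cmd = linear(*gripper_head, control)
    return arm_cmd, ee_cmd


control = [0.1] * CONTROL_DIM
arm_cmd, ee_cmd = decode(control)
print(len(arm_cmd), len(ee_cmd))
```

The point of the split is that only the small end-effector head has to know about a specific actuator; the representation feeding both heads stays shared.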

Because the embedding and most of the decoder are shared across modules and grippers, swapping hardware does not require retraining the planner. Integrating a new gripper means adding a row to the embedding table, collecting a small targeted dataset of teleoperated demonstrations to ground it, and letting the gripper head learn the new actuator's specific behavior. Everything else comes along unchanged.
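The integration steps can be sketched in a few lines. This is a toy model under stated assumptions: the `GripperEmbeddingTable` class, its methods, the embedding size, and the stand-in gradient are hypothetical, and the real system presumably trains on teleoperated demonstrations rather than a fixed gradient. What the sketch shows is the shape of the idea: existing rows stay frozen, and only the new gripper's row receives updates.

```python
import random


class GripperEmbeddingTable:
    """Toy shared embedding table: one learned row per gripper (hypothetical)."""

    def __init__(self, dim, seed=0):
        self.dim = dim
        self.rows = {}          # gripper name -> embedding vector
        self.trainable = set()  # names whose rows may still be updated
        self._rng = random.Random(seed)

    def add_gripper(self, name):
        """Add a new row with a small random init and mark it trainable."""
        if name in self.rows:
            raise ValueError(f"gripper {name!r} already registered")
        self.rows[name] = [self._rng.gauss(0.0, 0.02) for _ in range(self.dim)]
        self.trainable.add(name)

    def freeze(self, name):
        """Stop further updates to an existing row."""
        self.trainable.discard(name)

    def apply_gradient(self, name, grad, lr=0.1):
        """Take one gradient step on a row; frozen rows are left untouched."""
        if name not in self.trainable:
            return False
        self.rows[name] = [v - lr * g for v, g in zip(self.rows[name], grad)]
        return True


table = GripperEmbeddingTable(dim=4)
for existing in ("two_finger", "suction", "humanoid_hand"):
    table.add_gripper(existing)
    table.freeze(existing)  # already-deployed grippers carry over unchanged

table.add_gripper("shoebox")  # the new row for the new hardware
before = {name: list(row) for name, row in table.rows.items()}

grad = [1.0, 1.0, 1.0, 1.0]  # stand-in for a gradient from demonstrations
updated = {name: table.apply_gradient(name, grad) for name in list(table.rows)}
print(updated)
```

Only the `shoebox` row moves; the three existing rows are identical before and after the update.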

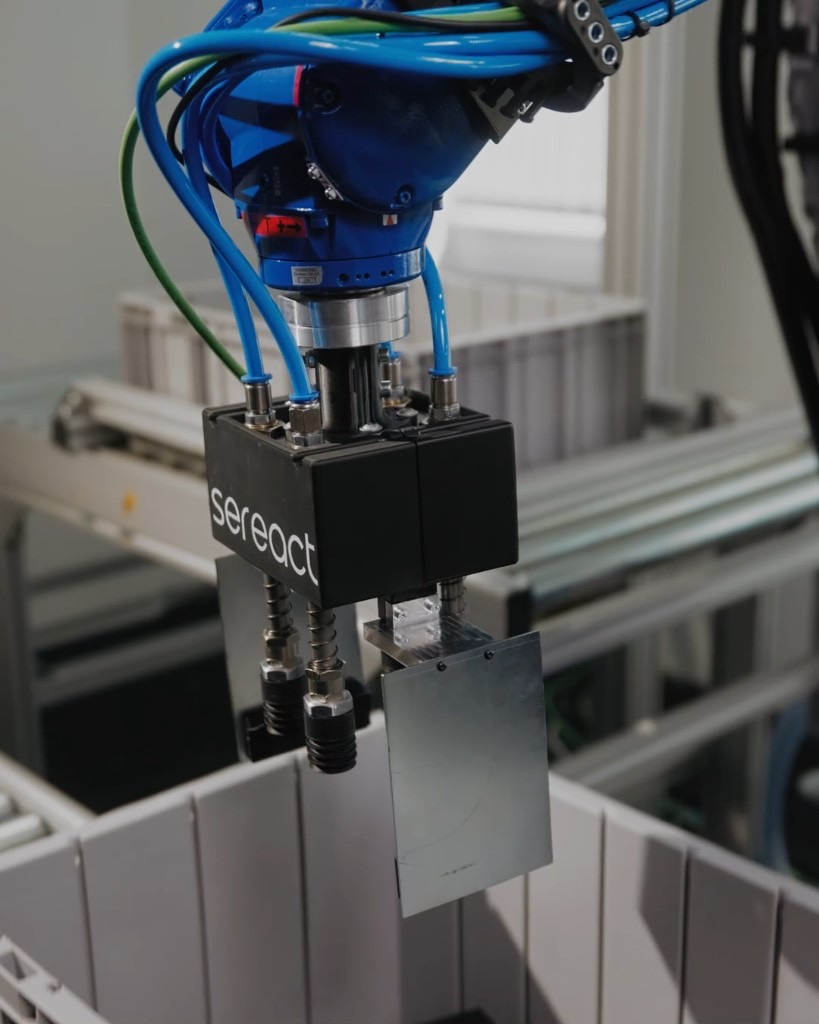

The Shoebox Gripper - Our single-arm end-effector built from the ground up for shoeboxes.

Shoeboxes are deceptively hard. They're rigid enough to reward a confident grasp and fragile enough to punish a wrong one. They stack tightly. They come in a dozen sizes from the same brand and a hundred sizes across a fashion catalog. They arrive with lids loose, with corners crushed, with shrink wrap half-on.

Our shoebox gripper is purpose-built for that category. The geometry is tuned to the standard shoebox footprint range, with compliance in the contact surfaces to absorb the variation. It commits in a single motion, no regrasp, no probing.

Where the shoebox gripper performs best:

- High-throughput picking cells with sustained shifts at peak rate, where every second of cycle time matters.

- Outbound fulfillment for footwear retailers, where placement quality and orientation matter for downstream packing.

Cortex picked up the shoebox gripper the way it picks up any new hand: a small set of teleoperated demonstrations, no retraining of the planner, deployed on a customer site within weeks of the hardware being ready.

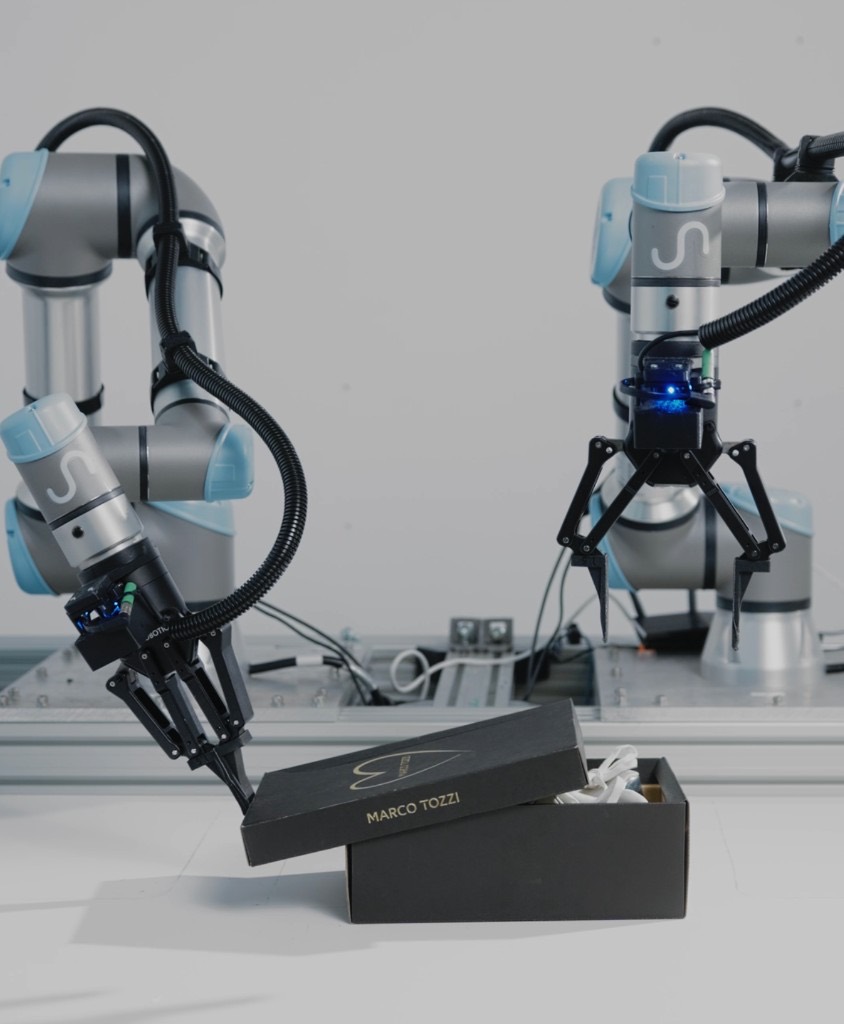

Two-finger gripper

The two-finger gripper is the workhorse. Simple mechanism, robust, fast, easy to maintain. Two opposing jaws, configurable tip geometry, a force range that covers most rigid and semi-rigid items in a fashion or e-commerce catalog.

Where it performs best:

- Returns stations

- Boxed goods of varied sizes

- Items that need a confident pinch

Humanoid hand

The humanoid hand is the most general end-effector we run, and the one with the highest dexterity ceiling. Five fingers, a thumb that opposes, a workspace that overlaps with what humans use. It's the gripper of choice for environments designed around people: shop floors, warehouses with human-scale shelving, returns stations that need to handle whatever a customer sent back.

Where it performs best:

- Loading and unloading carts and totes

- Mixed-object handling

- Soft and irregular goods

Suction gripper

Suction is the fastest grasp we have when the geometry cooperates. A flat or near-flat surface, a clean seal, a single vertical pick. Cortex runs it the same way it runs everything else: as one option among several, picked when the scene calls for it.

Where it performs best:

- Polybag picking

- Flat-topped boxes and cartons

- High-throughput single-arm cells

Beyond the warehouse

Fashion fulfillment is the immediate pull. Footwear, apparel, and returns are categories where shoebox handling, garment manipulation, and high-mix SKU diversity are the daily reality, and where unit economics depend on hitting peak throughput without compromising on placement quality. The same Cortex deployment that runs returns at one customer can move to a fashion fulfillment center and start picking shoeboxes the next week, on hardware that did not exist in the training set a month earlier.

The same logic extends further. Manufacturing cells need different end-effectors for different parts, and the model running across them needs to know when to switch and how. Household environments, the next frontier for physical AI, will require an even broader range of tools, because no single hand will ever cover the diversity of objects in a real home. The robotics companies that win in those environments will be the ones whose models do not care which hand they're wearing, and whose hardware roadmap is driven by what the intelligence layer needs next rather than the other way around.

Every new gripper we integrate makes the next one faster. Every shoebox picked, on any robot, makes the next pick on any other robot more reliable. Hardware is the infrastructure. Intelligence is the leverage. We've built a system where adding more of the first compounds the value of the second, and we're shipping that system today across more than 200 deployments in production.
